Migrating an event pipeline from NATS to Kafka with zero downtime

Wallarm (YC S16) is a Series C cybersecurity startup protecting more than 20,000 applications with its API security platform. It sits in front of clients’ apps, analyzes every incoming request, and blocks malicious traffic in real time.

For Wallarm, Evil Martians migrated their large-volume core event pipeline from NATS to Kafka with zero downtime, fixed event duplication in ClickHouse, and built internal tools for API traffic analysis.


Martian project team

Alexander Baygeldin

Backend engineer

Igor Platonov

Backend engineer

Andrey Novikov

Backend engineer

Anton Senkovskiy

Account Manager

Why Wallarm hired Evil Martians

With Black Friday on the horizon, the timeline wasn’t flexible. Wallarm’s traffic was growing across 20,000+ protected applications, and the team needed to migrate from NATS to managed Kafka without pulling engineers off the core product. Wallarm brought in Evil Martians to run the migration.

Engineering teams are often thinly spread, making it impossible for them to step out of their core responsibilities. Hiring isn’t always the right choice. Wallarm needed senior engineers who could jump in and solve these problems fast, whether the pipeline ran at 3,000 or 30,000 RPS.

Part 1: cleaning up ClickHouse and removing duplicates

Every user request that passes through Wallarm’s WAAP (Web Application and API Protection) gets analyzed in the cloud. The system generates a stream of events, which was piped into ClickHouse and NATS for storage and reporting. That process was producing duplicate events.

For a security product, this isn’t a cosmetic issue. If your dashboard shows phantom attacks or inflated request counts, your customers lose trust in what they’re looking at. Wallarm needed to ensure users had access to accurate data.

During the first phase of our work, Evil Martians handled event deduplication in ClickHouse, migrated some services to NATS, and worked on microservices in Go.

To achieve exactly-once semantics (EOS), we experimented with materialized views in ClickHouse to filter out duplicates without creating bottlenecks. The goal was to guarantee event uniqueness while preserving the throughput the system needed to keep up with incoming traffic. Removing the duplicates resolved the issue and helped improve end-user satisfaction.
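The core idea behind deduplication can be sketched outside ClickHouse. The Python snippet below is only an illustration, not the materialized-view setup used on the project: it assumes every event carries a unique `event_id` field (a hypothetical name) and keeps a bounded window of recently seen IDs, so memory stays flat and each lookup is constant time.

```python
from collections import OrderedDict


class WindowedDeduplicator:
    """Remembers the most recent `capacity` event IDs and flags repeats."""

    def __init__(self, capacity=100_000):
        self.capacity = capacity
        self._seen = OrderedDict()  # insertion-ordered set of event IDs

    def is_new(self, event_id):
        if event_id in self._seen:
            # Already seen: refresh its position so it is evicted later.
            self._seen.move_to_end(event_id)
            return False
        self._seen[event_id] = None
        if len(self._seen) > self.capacity:
            # Evict the least recently seen ID to keep memory bounded.
            self._seen.popitem(last=False)
        return True


def deduplicate(events, dedup):
    """Returns the events whose `event_id` has not been seen in the window."""
    return [event for event in events if dedup.is_new(event["event_id"])]


# Hypothetical events: the third one is a redelivery of the first.
events = [
    {"event_id": "a", "type": "attack"},
    {"event_id": "b", "type": "request"},
    {"event_id": "a", "type": "attack"},
]
unique_events = deduplicate(events, WindowedDeduplicator(capacity=1000))
```

The window size is the trade-off: a duplicate that arrives after its ID has been evicted slips through, so the window has to cover the longest realistic redelivery delay.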

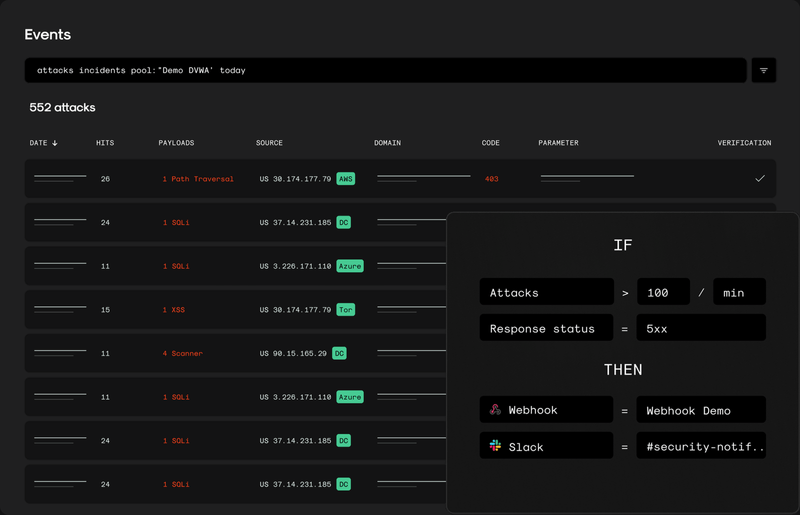

User-facing view of Wallarm’s events

Part 2: migrating NATS to Kafka in two months, building internal libraries, and analyzing API traffic

The system pressure

Wallarm’s traffic had outgrown the NATS-based pipeline. The system needed more headroom to keep up with a growing customer base. The Wallarm team decided to migrate to Kafka. This migration covered dozens of topics, with around 30k RPS on the busiest topic and roughly 2,500–3,000 RPS per vCPU.
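To put those figures in perspective, here is a back-of-the-envelope calculation. It assumes the per-vCPU figure describes processing throughput that can be applied directly to the busiest topic; the case study states the two numbers separately, so treat the result as a rough estimate rather than the actual sizing.

```python
import math

PEAK_RPS = 30_000             # busiest topic, from the figures above
PER_VCPU_RPS = (2_500, 3_000)  # lower and upper per-vCPU throughput


def vcpus_needed(peak_rps, per_vcpu_rps):
    """Rounds up, since a fraction of a vCPU cannot be provisioned."""
    return math.ceil(peak_rps / per_vcpu_rps)


best_case = vcpus_needed(PEAK_RPS, PER_VCPU_RPS[1])   # 30,000 / 3,000
worst_case = vcpus_needed(PEAK_RPS, PER_VCPU_RPS[0])  # 30,000 / 2,500
```

Under that assumption, the busiest topic alone needs roughly 10 to 12 vCPUs at peak, before adding any headroom.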

The migration to Kafka

Evil Martians completed the migration of the large-volume core event pipeline to Kafka in two months with zero outages. The new system is in production, handles peak loads of 30k RPS, and has room to scale further.

Building internal tools

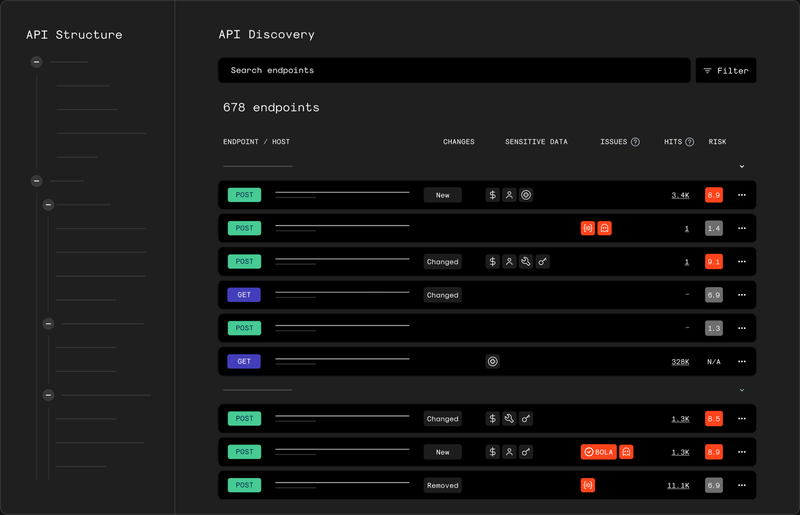

Wallarm’s user-facing API discovery page

After the migration, our team continued working with Wallarm’s API Discovery team, which is responsible for analyzing API traffic to identify endpoints, understand what they do, and assess the risks associated with them.

To improve developer experience, Evil Martians built an internal library to help other Wallarm teams apply URL parameterization to raw paths and endpoints. Essentially, we extracted the existing logic that the API Discovery team used internally and made it available to other teams. The library is in its early stages, but it’s already saving time.
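For readers unfamiliar with URL parameterization, here is a minimal sketch of the idea in Python. It is not Wallarm’s library: the function name, the placeholder names, and the two rules (integers and UUIDs) are assumptions chosen for illustration, and a production implementation would cover many more cases.

```python
import re

# Hypothetical rules: a path segment that is a UUID or a plain integer
# is treated as a variable part of the URL.
UUID_RE = re.compile(
    r"[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-"
    r"[0-9a-fA-F]{4}-[0-9a-fA-F]{12}"
)
INT_RE = re.compile(r"\d+")


def parameterize_path(raw_path):
    """Replaces variable path segments with named placeholders."""
    path = raw_path.split("?", 1)[0]  # drop the query string
    segments = []
    for segment in path.split("/"):
        if UUID_RE.fullmatch(segment):
            segments.append("{uuid}")
        elif INT_RE.fullmatch(segment):
            segments.append("{id}")
        else:
            segments.append(segment)
    return "/".join(segments)


template = parameterize_path(
    "/users/123/orders/550e8400-e29b-41d4-a716-446655440000?expand=1"
)
# template == "/users/{id}/orders/{uuid}"
```

With a transformation like this, thousands of distinct raw paths collapse into a handful of endpoint templates that can be counted, compared, and assessed.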

Reconstructing business flows from raw traffic

We also built a Python-based pipeline for Wallarm’s API Discovery team to analyze HTTP session data and reconstruct business flows from raw traffic.

In raw API traffic, a single business action is usually split across many low-level requests. Our goal was to turn that noisy traffic into structured, explainable flows by analyzing repeated request sequences across sessions.

Our pipeline parameterizes raw URLs, maps endpoints to business objects, and uses those higher-level objects to roll low-level traffic up into domain-level actions. Where it helped readability, we also applied LLM-assisted naming to turn technical flow candidates into clearer business-facing labels.
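The steps above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the pipeline itself: the `ACTIONS` mapping, the shop-style endpoints, and the rule "a flow is an action sequence that appears in at least two sessions" are all hypothetical, and the LLM-assisted naming step is left out.

```python
import re
from collections import Counter


def parameterize(path):
    """Simplified parameterization: numeric path segments become {id}."""
    return re.sub(r"/\d+(?=/|$)", "/{id}", path)


# Hypothetical mapping from (method, endpoint template) to a business action.
ACTIONS = {
    ("POST", "/cart/items"): "add_to_cart",
    ("GET", "/cart"): "view_cart",
    ("POST", "/orders"): "place_order",
    ("GET", "/orders/{id}"): "view_order",
}


def to_actions(session):
    """Rolls raw requests up into actions, dropping unmapped requests
    and collapsing immediate repeats of the same action."""
    actions = []
    for method, path in session:
        action = ACTIONS.get((method, parameterize(path)))
        if action and (not actions or actions[-1] != action):
            actions.append(action)
    return actions


def frequent_flows(sessions, length=3, min_sessions=2):
    """Returns action sequences of `length` seen in >= `min_sessions` sessions."""
    counts = Counter()
    for session in sessions:
        actions = to_actions(session)
        # A set ensures each sequence is counted once per session.
        counts.update(
            {tuple(actions[i:i + length])
             for i in range(len(actions) - length + 1)}
        )
    return [flow for flow, n in counts.most_common() if n >= min_sessions]


sessions = [
    [("POST", "/cart/items"), ("POST", "/cart/items"), ("GET", "/cart"),
     ("POST", "/orders"), ("GET", "/orders/17")],
    [("GET", "/static/app.js"), ("POST", "/cart/items"), ("GET", "/cart"),
     ("POST", "/orders")],
    [("GET", "/orders/5")],
]
flows = frequent_flows(sessions)
# flows == [("add_to_cart", "view_cart", "place_order")]
```

Counting each sequence once per session keeps one very chatty session from dominating the result, which matters when the goal is to find behavior shared across users.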

This feature is still in development, but it already produces structured flow artifacts from traffic and gives Wallarm better behavioral context for future product capabilities:

- Faster understanding of how a protected application actually behaves

- Less manual analysis of raw sessions and endpoints

- More explainable output for future UI and reporting

- A stronger foundation for tying security events to business context, not just isolated endpoints

What Evil Martians shipped

For Wallarm, Evil Martians delivered a zero-downtime Kafka migration, ClickHouse deduplication, an internal developer experience library, and a Python pipeline that turns raw API traffic into structured business flows. And we did it in a matter of weeks.