From 0 signal to 128 cold signups: product validation on a $2K budget

Building a product is one thing; knowing someone is willing to pay for it is another. After shipping Thicket’s marketplace in 2 weeks, Evil Martians built a full demand validation infrastructure around it. The results: 36K impressions, 128 cold-traffic waitlist joins, and a landing page variant confirmed at 95% statistical confidence, all on a $2K ad budget. This post lays out the repeatable validation playbook behind those numbers: sequenced tools, just a few rules, and a setup the founder can operate on his own.

Validation is infrastructure, not a campaign

Thicket is an educational platform where PhD-level experts offer live classes in literature, history, art, and other subjects for curious learners. Founder Nick Constantino, himself a PhD in History, saw a need for humanities learning outside of universities, but wanted us to help validate student demand. While he had a Google Ads account and the instinct that his product was needed, he didn’t yet have a connection between ad spend and product decisions.

Doubling a budget on an unconfigured campaign doesn’t get two times the signal; it’s two times the waste.

Evil Martians approached validation the same way we approach architecture: sequenced by need, not installed all at once. Three analytics tools, added in order:

- Plausible first, for immediate traffic tracking with zero configuration overhead.

- PostHog second, once we needed feature flags to understand how users were interacting with the landing page.

- GA4 last, specifically to close the loop with Google Ads optimization.

Each tool was added when it was needed; the idea is that the stack grows with the signal, not in front of it.

Irina Nazarova, CEO at Evil Martians

Google Ads as a signal pipeline

Before touching the budget, we configured the foundation: conversion tracking wired directly to waitlist joins, audience signals mapped to education demographics, themed taglines across asset groups, and ad variations targeting different user segments. Then we set a rule: don’t touch the budget until ~15 conversions accumulate.

When the budget was then doubled, the 2x spend produced a 2x conversion rate. That was direct confirmation that the foundation was working, not just that money was being spent.

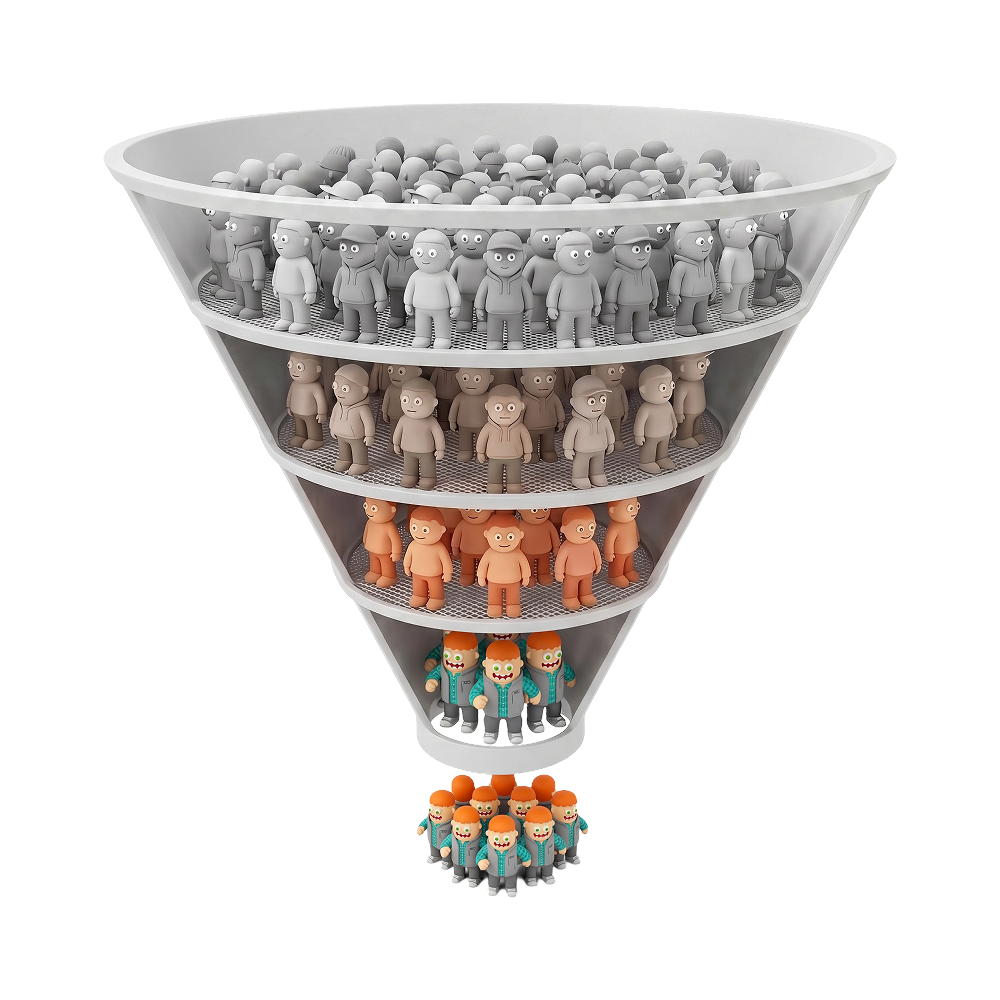

As we ran the campaign, the founder analyzed the search terms triggering ads and excluded the ones bringing clicks without conversion intent. The goal wasn’t just signups; it was testing real demand for discussion-based learning outside of universities. Each exclusion sharpened the funnel: lower wasted spend, higher-intent traffic, cleaner signal about who actually wants this product.
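The exclusion pass can be approximated with a simple filter over a search-term report. This is a minimal sketch, not the founder's actual process: the `SearchTermRow` shape, the `min_clicks` threshold, and every row of data are hypothetical and stand in for whatever is exported from the ads account.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchTermRow:
    """One row of a search-term report (hypothetical shape)."""
    term: str
    clicks: int
    conversions: int


def exclusion_candidates(rows, min_clicks=10):
    """Return terms with enough clicks to judge and zero conversions.

    min_clicks is an assumed threshold: below it, a term has too little
    traffic to say anything about intent. Results are sorted by wasted
    clicks, highest first.
    """
    flagged = [r for r in rows if r.clicks >= min_clicks and r.conversions == 0]
    flagged.sort(key=lambda r: r.clicks, reverse=True)
    return [r.term for r in flagged]


# Hypothetical report rows, for illustration only.
report = [
    SearchTermRow("free online courses", clicks=42, conversions=0),
    SearchTermRow("live history seminar", clicks=18, conversions=3),
    SearchTermRow("phd programs near me", clicks=15, conversions=0),
    SearchTermRow("art history class", clicks=4, conversions=0),  # too few clicks to judge
]

candidates = exclusion_candidates(report)
print(candidates)
```

A list like this is only a starting point for review: a human still decides which candidates genuinely lack intent before adding them as exclusions.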

By the end of the learning phase, cost-per-acquisition was below the target set at kickoff, leaving real room to scale.
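The budget rule and the cost-per-acquisition check boil down to a few lines of arithmetic. The sketch below is an illustration under assumptions: the function names and the `target_cpa` value of 20.0 are hypothetical (the post does not state the actual target), and the blended figure assumes the whole $2K was spent and all 128 joins are attributed to it.

```python
def cost_per_acquisition(spend, conversions):
    """Spend divided by conversions; None when there is nothing to divide by."""
    if conversions <= 0:
        return None
    return spend / conversions


def budget_action(conversions, spend, target_cpa, min_conversions=15):
    """Decide what to do with the budget.

    - "hold":   too few conversions to trust the signal yet
    - "scale":  enough conversions and CPA at or below target
    - "refine": enough conversions but CPA above target
    """
    if conversions < min_conversions:
        return "hold"
    cpa = cost_per_acquisition(spend, conversions)
    return "scale" if cpa <= target_cpa else "refine"


# Blended CPA from the headline numbers (assumes full spend and full attribution).
blended_cpa = cost_per_acquisition(2000, 128)
print(blended_cpa)

# Hypothetical target of 20.0 per waitlist join, for illustration only.
print(budget_action(conversions=8, spend=150.0, target_cpa=20.0))
print(budget_action(conversions=128, spend=2000.0, target_cpa=20.0))
```

Keeping the conversion threshold as an explicit parameter makes the rule easy to revisit once the campaign has more history.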

A/B testing with cold traffic

Evil Martians’ testing discipline followed three rules:

- One variable per experiment

- Every variant previewed in Storybook before deployment

- Statistical analysis run by PostHog at 95% confidence

We tested the title first, then the hero asset, always in sequence and never in parallel. 1,400 views over two weeks gave us enough volume to find a real winner rather than a directional hint.
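To make "95% confidence" concrete, here is a textbook two-proportion z-test. It is a sketch of the general idea, not a description of how PostHog computes its results, which may use a different statistical method. The even 700/700 split of the 1,400 views and both conversion counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value). Uses the pooled-proportion standard error.
    """
    pooled = (conv_a + conv_b) / (n_a + n_b)
    variance = pooled * (1 - pooled) * (1 / n_a + 1 / n_b)
    if variance == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (conv_b / n_b - conv_a / n_a) / sqrt(variance)
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical counts: control headline vs. outcome-focused headline.
z, p_value = two_proportion_z_test(conv_a=40, n_a=700, conv_b=64, n_b=700)
significant = p_value < 0.05  # 95% confidence corresponds to alpha = 0.05
print(f"z={z:.2f}, p={p_value:.4f}, significant={significant}")
```

Running one variable per experiment, as the rules above require, is what keeps a test like this interpretable: a significant difference can then be attributed to the single element that changed.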

The winning headline focused on student outcomes rather than platform features. This is the kind of signal that only cold traffic can produce. People with no prior relationship to the product, no pre-existing goodwill, and no reason to be generous showed us which message actually resonated, and that is something warm audiences can’t give you.

Scaling a winning signal

With the A/B winner confirmed and a stable cost-per-acquisition from Google Ads, the same playbook expanded to other advertising platforms. We ported the winning headline and hero asset from the A/B test, then rebuilt the targeting layer: interest stacks for adjacent education platforms, lookalikes seeded from Google Ads converters, and creative variants tuned for feed scroll instead of search ranking.

The result was a clean division of labor across multiple channels. Google Ads delivered price discovery with a stable cost-per-lead and a baseline understanding of who actually pays attention to the offer. The other advertising platforms scaled what Google validated, pushing waitlist volume past the ceiling that search volume alone could deliver.

Three feedback channels

Waitlist joins told us people were interested. They didn’t tell us what those people would pay, what they couldn’t find, or whether they’d engage before the product existed. We built three distinct feedback channels to answer those questions.

Willingness-to-pay signal: Price tags placed on production features in the live platform, giving us real behavioral data on pricing sensitivity before any purchase flow was built.

Unmet demand: A topic suggestion banner surfaced to users who reached the platform and didn’t find what they were looking for—turning a dead end into a research asset.

Engagement depth: A scroll-triggered survey that fired based on user behavior, not a timer, capturing responses from users who were genuinely engaged rather than bounced.

Each channel maps to a specific business question. The founder then supplemented these insights with a large-sample external survey. Taken together, these gave the founder a complete, evidence-based picture of who his users are and what would actually make them stay.

Founder empowerment

The real deliverable wasn’t the pipeline itself but the handoff: orchestrating it so that the founder could run it independently. By the end of the engagement, Nick was operating his own refinement loop on the ad campaigns and testing warm channels on the same infrastructure.

Evil Martians built a system so we could leave; if we create dependencies, we haven’t done the job. The founder should own this, and he does.

Results at a glance

| | Before | After |
|---|---|---|
| Product | Mocks and assumptions | Marketplace tested with a real teacher in 2 weeks |
| Cold-traffic validation | None | 128 waitlist joins |
| Ad pipeline | Unused account | Tuned funnel with term exclusions and audience refinement |
| A/B testing | None | Winner confirmed at 95% confidence, 1,400 views |
| Budget response | Unknown | Doubled after 12 joins, kept scaling |
| User feedback | Zero channels | 3 channels: pricing, surveys, banners |
| Brand asset iteration | ~1 week manual | 5 variants in 2 days, automated |
| Component iteration | Hours per variant | 10 minutes per variant in Storybook |
| Cost per acquisition | Unknown | Below target, with room to scale |

The cold truth and the takeaway

The cold traffic was proof that real people, and strangers at that, care about Thicket’s live humanities seminars. They found an ad and decided to join.

Now, the same infrastructure that measured cold-traffic conversions measures warm channels. Email campaigns to engaged lists and co-marketing partnerships with education platforms are all plugged into the same funnel, the same conversion tracking, the same analytical stack. Nothing gets rebuilt!

This validation playbook is repeatable for any project where you need signal before you scale. The tools are sequenced, the rules are few, and founders can run it on their own.